Program Management Reports

Knowledge is Power

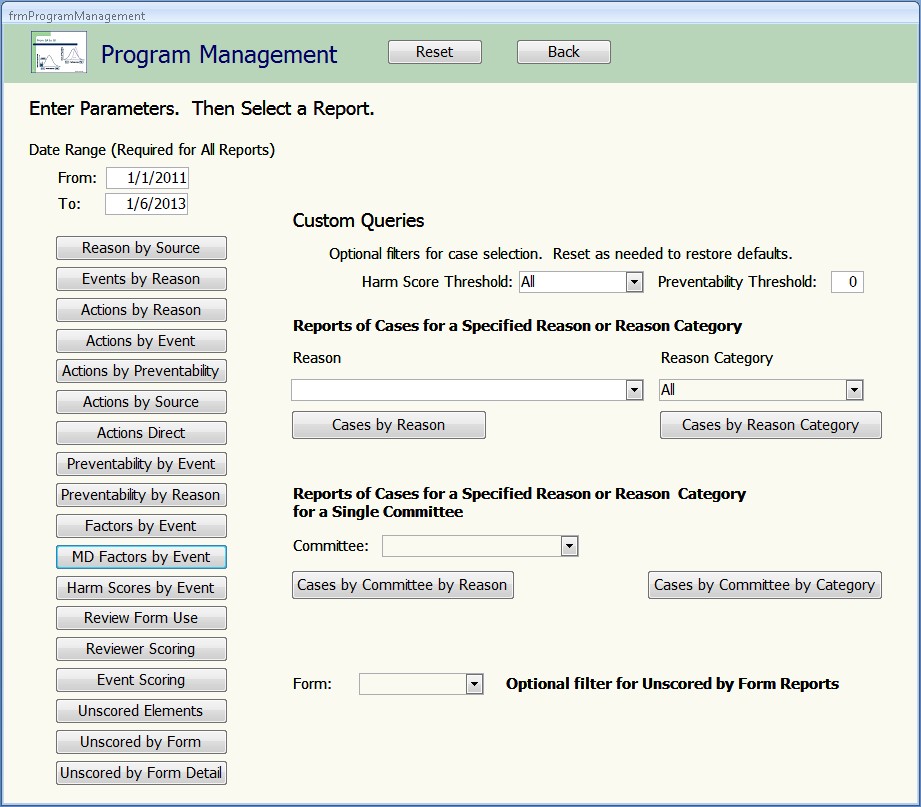

This form groups an important suite of reports that support ongoing peer review program improvement.

The generic screens conventionally used to choose cases for peer review are known to be inefficient at identifying substandard care. There is insufficient data to judge their potential value in identifying the learning opportunities that are the grist for the QI model.

The first 10 reports on this list complement one another in providing a picture of how cases are being identified and what actions are resulting from their review. They give leaders the fundamental information needed to improve case identification strategies and the case review process. Several are demonstrated here. They are complemented by the Custom Queries function, which enables drill-down analysis of the triggers in use.

The Reviewer Scoring reports reveal bias and inconsistencies among reviewers and across committees. They offer a simple feedback tool to reduce variability on these dimensions and thereby improve the reliability of clinical performance measurement.